If you split white light by wavelength, you get a rainbow. If you take a picture of the result, you get, well, a picture of a rainbow.

But if you take a picture of the same rainbow with two different cameras, you may also get two different results.

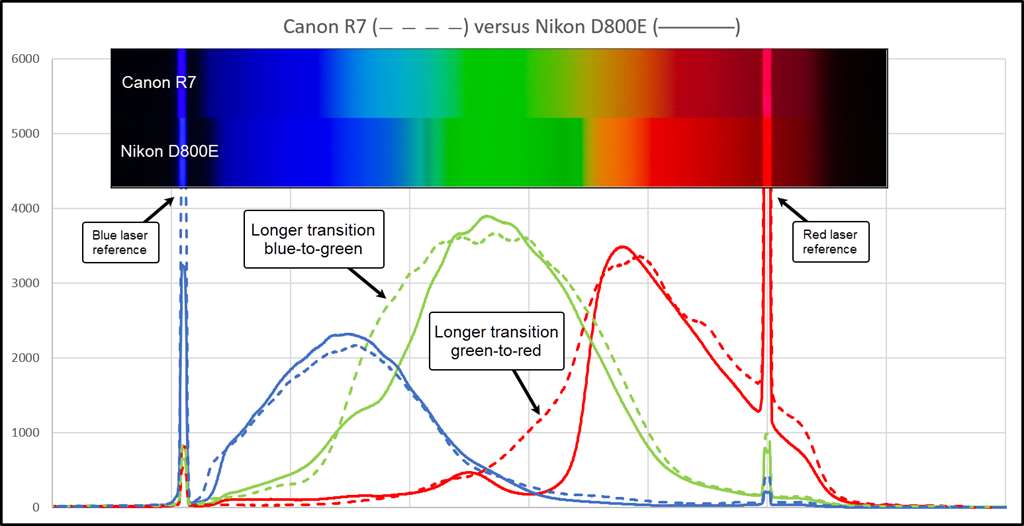

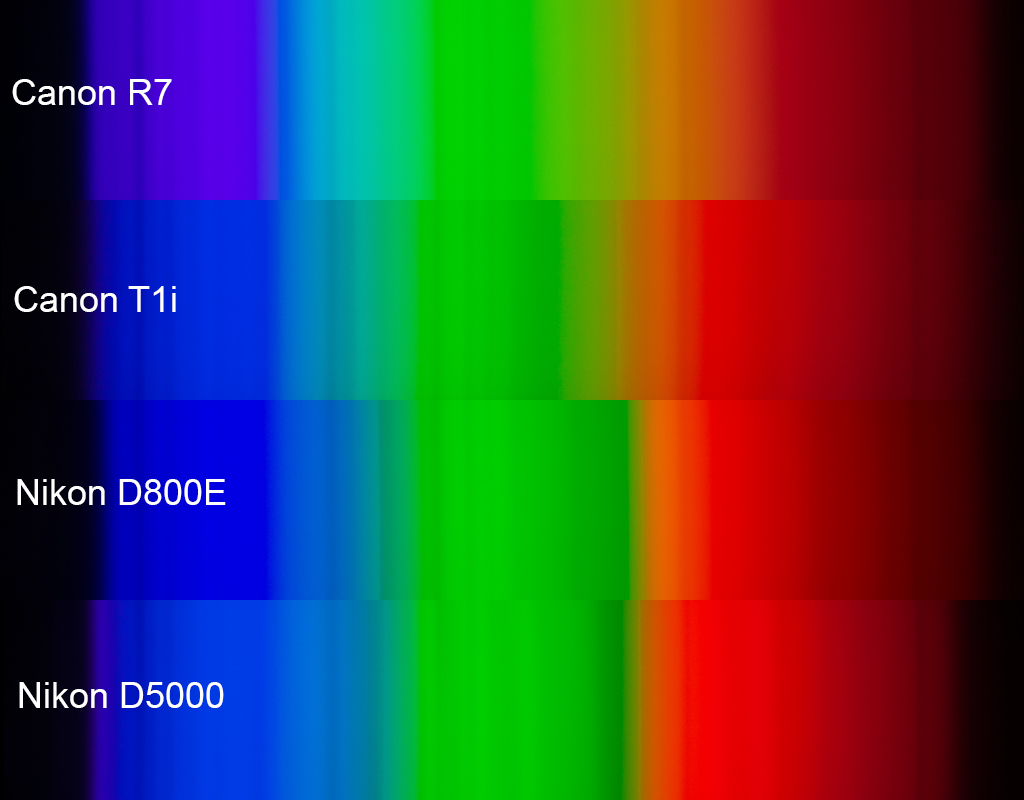

The diagram above illustrates this effect for two specific cameras.

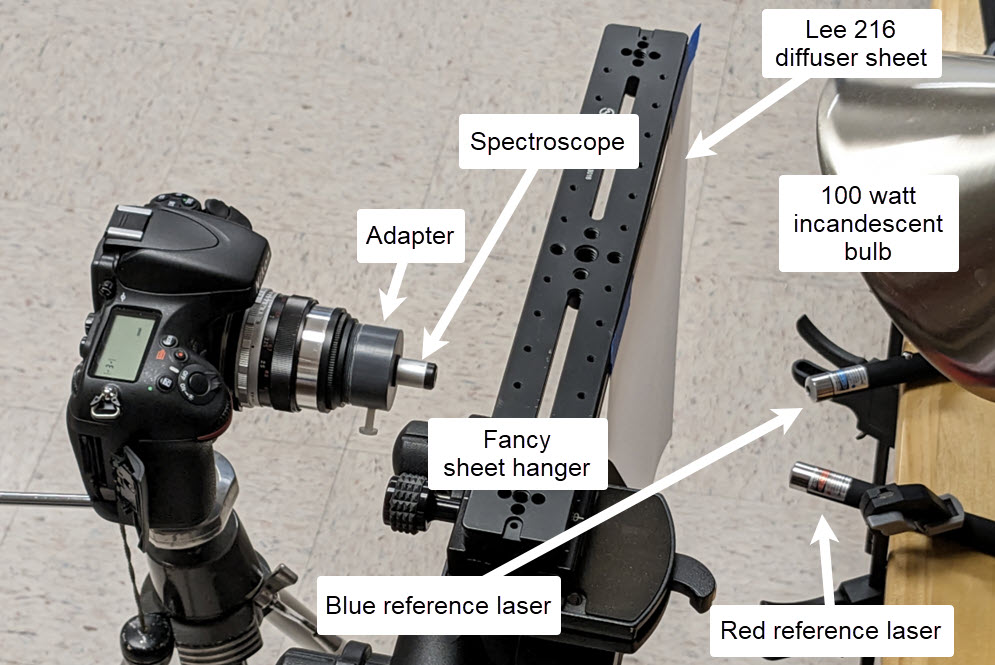

Here's the setup for shooting:

Very quickly, what you're looking at in the diagram at top are plots of the raw sensor data, aligned with RGB spectra as rendered by Photoshop Camera Raw using the Tungsten (2850K) white balance, with no adjustments other than exposure tweaks to match brightness.

Getting to this point was a bit of a journey. It started when I became intrigued by Lou Jost's report on using Fraunhofer lines as calibration points for a small spectroscope. So I bought a similar spectroscope and made an adapter that would let me use it with whatever camera I had handy.

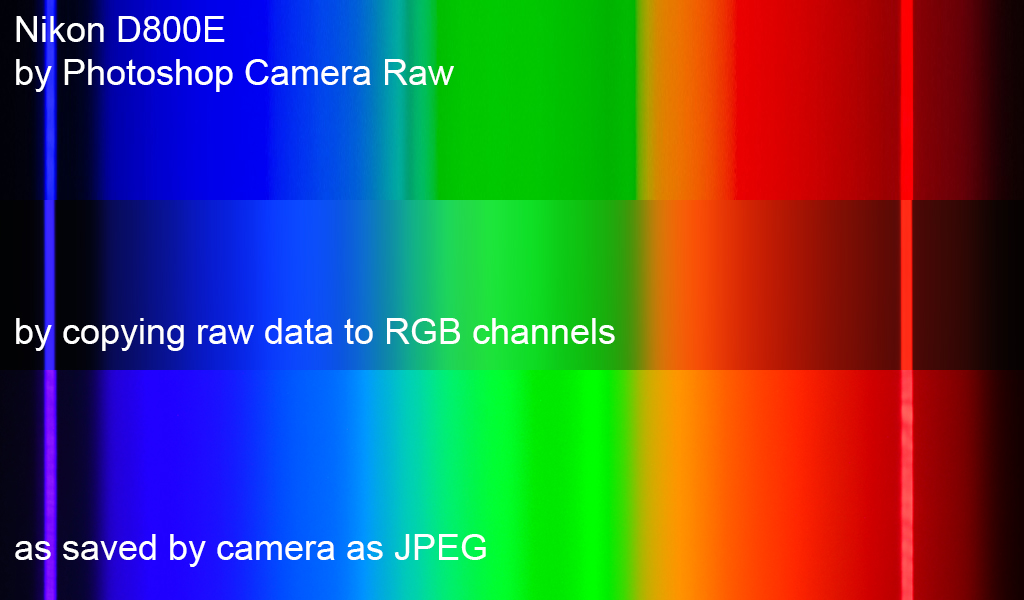

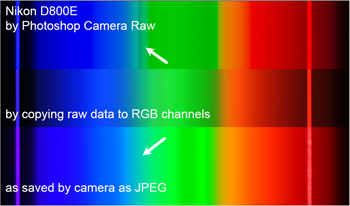

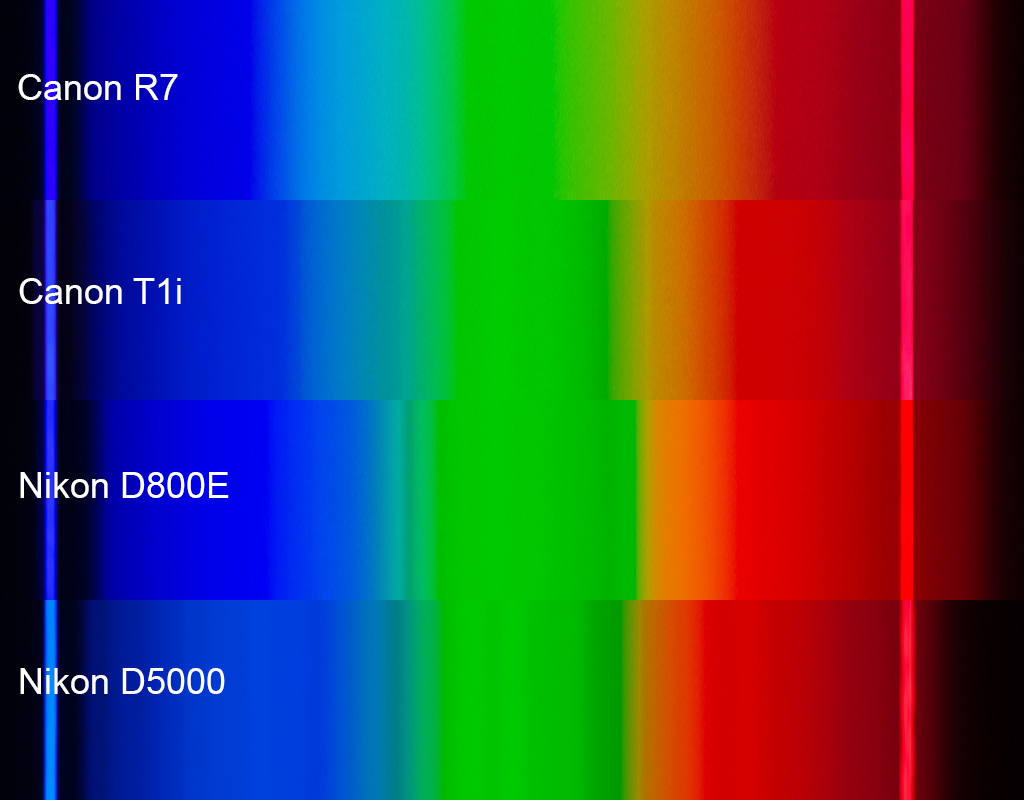

Of course the first thing I did was to point a camera at the sky and snap some pictures. The pictures looked oddly different from what I expected. So I pointed another camera at the sky, snapped some more pictures, and compared the results. With the cheap spectroscope, the Fraunhofer lines are not crystal clear, and in fact the pictures looked so different from each other that I was not sure I was matching up the Fraunhofer lines correctly. Here is that situation, looking at a white cloud and again rendering with Camera Raw:

I wanted something more definite for wavelength markers; lasers came to mind. For just a few dollars, Amazon sent me a set of three laser pointers, one each red, green, and blue, sold as cat toys! The red and blue ones worked great, so I repeated the exercise with a studio setup using incandescent lighting to get a nice smooth repeatable spectrum. From that, I got this:

The differences in smoothness were striking, so I drilled down into the raw files using some purpose-built Python code. It turns out that by importing rawpy and cv2, the task of extracting raw values from the separate RGB photosites takes only a dozen lines of code. That got me hooked, and thus began a long chain of small improvements that ended up as roughly 100 lines of code that read the raw files, automatically find the reference spikes, and use those to export normalized data and images to facilitate comparisons.
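For anyone who wants to try something similar, here is a minimal sketch of the idea. It is not the code used for the plots in this post. All function names are made up for illustration, and it uses numpy rather than cv2. It assumes a sensor with a 2x2 Bayer pattern, a spectrum dispersed horizontally across the frame, and a pixel-to-wavelength mapping that is close enough to linear between the two laser spikes. The laser wavelengths are not given here, so you have to supply them yourself.

```python
import numpy as np


def split_bayer_planes(mosaic, colors):
    """Split a Bayer mosaic into one half-resolution plane per color index.

    `colors` has the same shape as `mosaic` and holds a color index (0..3)
    for every photosite, as rawpy's `raw_colors_visible` does.
    Assumption: the pattern repeats every 2x2 pixels (true for Bayer,
    not for e.g. X-Trans sensors).
    """
    mosaic = np.asarray(mosaic, dtype=np.float64)
    tile = np.asarray(colors)[:2, :2]
    return {int(tile[r, c]): mosaic[r::2, c::2]
            for r in range(2) for c in range(2)}


def column_profile(plane):
    """Average down each column to get intensity versus horizontal position."""
    return plane.mean(axis=0)


def spike_column(profile):
    """Locate a laser reference spike as the brightest column."""
    return int(np.argmax(profile))


def wavelength_axis(n_cols, col_a, nm_a, col_b, nm_b):
    """Map column index to wavelength by a straight line through two spikes.

    Assumption: dispersion is approximately linear between the references.
    """
    cols = np.arange(n_cols)
    return nm_a + (cols - col_a) * (nm_b - nm_a) / (col_b - col_a)


def load_planes(path):
    """Read a raw file with rawpy and return its per-color planes."""
    import rawpy  # third-party; imported here so the rest runs without it
    with rawpy.imread(path) as raw:
        mosaic = raw.raw_image_visible.astype(np.float64)  # copy before close
        colors = raw.raw_colors_visible.copy()
    return split_bayer_planes(mosaic, colors)
```

In use, you would call `load_planes` on a frame that contains the laser spikes, find the red spike in the red plane and the blue spike in the blue plane with `spike_column(column_profile(...))`, and pass those two columns plus your lasers' wavelengths to `wavelength_axis`. Dividing each profile by its own maximum is one simple way to normalize before comparing cameras.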

Based on these spectra, it is obvious that there are big differences in spectral sensitivity between various cameras. The transitions between blue/green and green/red are particularly striking. When my eye looks through the spectroscope, I see one color blending smoothly into the next: red>orange>yellow>green>cyan>blue>indigo. But when I look at the images made by the cameras and software and displays, "lumpiness" seems to be the order of the day. OK, fine, I guess smooth must be difficult.

But then I had to wonder: if the spectral responses are lumpy, and spectral yellow seems especially dicey, then why is it that all these cameras can be talked into giving a decent picture of a bright yellow flower?

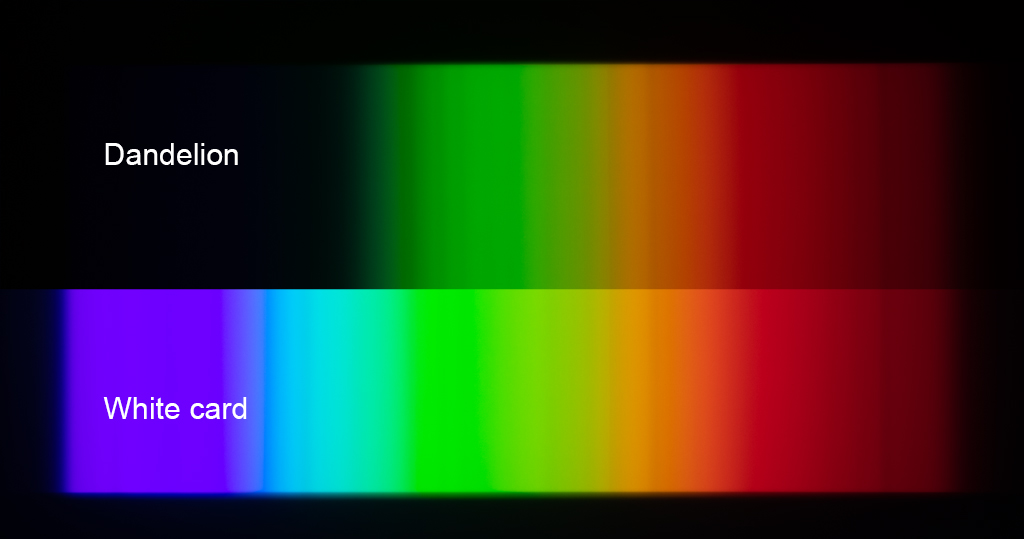

The answer to that question is suggested here:

Comparing the spectra from the white card and the dandelion, it's now clear that spectral yellow plays hardly any role at all in the appearance of the dandelion. Instead, the yellow of the dandelion is achieved by simply removing all the blues, leaving the entire green+red part of the spectrum to be reflected at nearly full intensity. In retrospect, it's clear this has to be true for anything that is bright yellow in reflection. That's because if much more than the blues were removed, then the total energy would be significantly reduced and the color would no longer be "bright".
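To put rough numbers on that energy argument, here is a small back-of-the-envelope sketch. It is idealized and not derived from the measured spectra: it assumes a flat illuminant over 400 to 700 nm and box-shaped reflectance bands, and the cutoff values (500 nm for "blues removed", 570 to 590 nm for a narrow "spectral yellow" band) are illustrative guesses.

```python
def reflected_fraction(lo_nm, hi_nm, band_lo=400.0, band_hi=700.0):
    """Fraction of a flat illuminant's energy reflected by a surface that
    reflects everything between lo_nm and hi_nm and nothing else.

    Assumptions: flat illuminant over band_lo..band_hi, box-shaped
    reflectance. Both are simplifications for illustration only.
    """
    lo = max(lo_nm, band_lo)
    hi = min(hi_nm, band_hi)
    return max(hi - lo, 0.0) / (band_hi - band_lo)


# "Dandelion-style" yellow: remove the blues, keep all of green + red.
broad_yellow = reflected_fraction(500.0, 700.0)

# Hypothetical surface reflecting only a narrow band of spectral yellow.
narrow_yellow = reflected_fraction(570.0, 590.0)

print(f"broad: {broad_yellow:.2f}, narrow: {narrow_yellow:.2f}")
```

Under these assumptions the broad reflector returns about two thirds of the incident energy, while the narrow one returns about a fifteenth, which is why only the first can look "bright".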

I think that's a good stopping point for now: cameras vary; spectra are more challenging than dandelions.

--Rik