Rik, I read about the pins and panos some years ago now and got my pins out then. Yes, it helps to see it!

For me it was a little eureka moment, tying up the reason why spiky highlights look sharp through several layers in a stack and move in different directions. Result! Thanks to everyone.

Now all I need is a photochromic layer on my objectives, so the brightest parts are dimmed and each point on the subject is seen by the whole lens. In fact, a programmable LCD layer would be useful: we could use it to stop the lens “seeing round” things when that’s not convenient.

It would be nice to get a handle on how some of the unwanted colours are actually created in the first place.

Cap’n Fwif, I assume your colours are oxides.

Stolen from Wikipedia:

“If steel has been freshly ground, sanded, or polished, it will form an oxide layer on its surface when heated. As the temperature of the steel is increased, the thickness of the iron oxide will also increase. Although iron oxide is not normally transparent, such thin layers do allow light to pass through, reflecting off both the upper and lower surfaces of the layer. This causes a phenomenon called thin-film interference, which produces colors on the surface. As the thickness of this layer increases with temperature, it causes the colors to change from a very light yellow, to brown, then purple, then blue. These colors appear at very precise temperatures, and provide the blacksmith with a very accurate gauge for measuring the temperature.”
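To put rough numbers on the quoted explanation, here is a minimal Python sketch of an idealised thin film. It assumes normal incidence and that the phase shifts at the two surfaces cancel, so the two reflections reinforce when the round trip through the film, 2nd, is a whole number of wavelengths. The function name and the refractive index of 2.5 are my own illustrative assumptions, not measured values for real temper oxide, and reflection off a metal doesn't give such a tidy phase shift, so treat it as showing the trend only.

```python
def reinforced_wavelengths(thickness_nm, n_film, lo_nm=380.0, hi_nm=750.0):
    """Visible wavelengths reinforced by an idealised thin film.

    Assumes normal incidence and that the reflection phase shifts at the
    top and bottom surfaces cancel, so reflections add in phase when
    2 * n_film * thickness_nm == m * wavelength for a whole number m.
    """
    path_difference = 2.0 * n_film * thickness_nm  # round trip through the film
    wavelengths = []
    m = 1
    # Larger m gives shorter wavelengths; stop once below the visible range
    while path_difference / m >= lo_nm:
        wavelength = path_difference / m
        if wavelength <= hi_nm:
            wavelengths.append(wavelength)
        m += 1
    return wavelengths


# n_film = 2.5 is an assumed round number for the oxide, purely illustrative
for d in (50, 100, 150, 300):
    print(d, "nm:", reinforced_wavelengths(d, 2.5))
```

As the film thickens, each reinforced wavelength slides towards the red and new, higher orders appear at the blue end, which is the sort of thing that makes the colours cycle as the oxide grows.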

I assume a dime is a nickel alloy. It would be interesting to clean one, see what happens, then gently heat it. If you etched it in an appropriate metallurgist’s acid, you’d probably see more of the underlying structure (that removes a little metal, not just oxide).

I imagine the “sparkle” comes from the flat, shiny metal under the oxide; I don’t know whether any diffraction/refraction lensing effects could be generated by such a thin layer.

The gamma distortion issue you mention, which I was first made aware of by Rylee Isitt here, got me thinking about what might happen for us too. (Bear with me...)

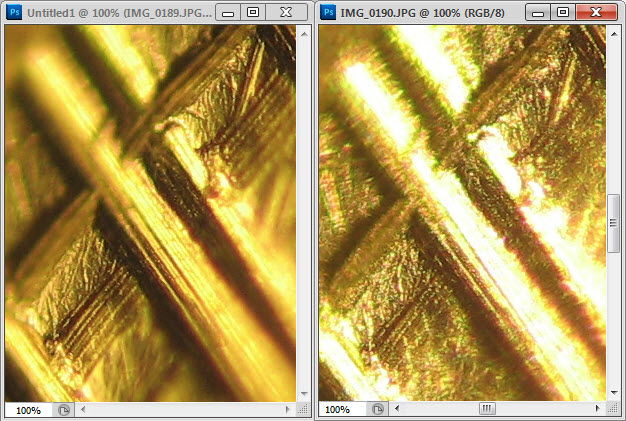

In Rik’s bug shot, there are dark parts and light parts on each side of pits in the surface, moving about. Some of that may be due to the ideas discussed above.

I’m thinking that the light parts can be seen to move about, but not the dark ones, largely because we can’t see what’s dark. Light + dark = light, dark + dark = invisible! So if the dark parts move, it wouldn’t be so obvious.
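A toy 1-D illustration of that point, as a hedged sketch: the function name and the brightness values (dark background 0.05, bright highlight 1.0, dark pit 0.0, on a linear scale from 0 to 1) are made up for illustration. Moving a feature by one pixel changes the image by at most the feature's contrast against its surroundings, so a dark pit moving on a dark background barely registers.

```python
import numpy as np


def max_change_when_moved(background, feature, shift=1, width=5, size=40):
    """Largest per-pixel difference when a feature moves by `shift` pixels.

    Toy 1-D model: a strip of `width` pixels at brightness `feature`
    sits on a uniform `background`, then is displaced sideways.
    """
    before = np.full(size, background, dtype=float)
    after = before.copy()
    start = size // 2 - width // 2
    before[start:start + width] = feature
    after[start + shift:start + shift + width] = feature
    return float(np.max(np.abs(after - before)))


print(max_change_when_moved(0.05, 1.0))  # bright highlight moving
print(max_change_when_moved(0.05, 0.0))  # dark pit moving
```

The bright case changes some pixels by 0.95 of full scale, the dark case by only 0.05, even though both features moved by exactly the same amount.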

On from there: when something goes out of focus, it’s blurred, so its intensity is spread across the image. But how does that averaging work? I don’t know: is it an arithmetic sum of normal distributions, or geometric, or logarithmic, phase-aware, or some horrible polynomial function? As well as simply being OOF (out of focus), there are aberrations blurring the feature too. What do they do? Does it matter if the rays go through different parts of the lens?
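For what it's worth, my understanding is that with ordinary incoherent lighting the optics add intensities linearly: the blurred image is the sharp intensity pattern convolved with the lens's point spread function (PSF), and a phase-aware sum of amplitudes only comes in with coherent light. Here's a minimal 1-D sketch of that, with a Gaussian standing in for the PSF. That's an assumption for convenience; a real defocus blur is closer to a disc, and aberrations distort it further. `gaussian_psf` and `blur` are just illustrative names.

```python
import numpy as np


def gaussian_psf(sigma, radius):
    """Normalised 1-D Gaussian kernel, a stand-in for a real defocus PSF."""
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-0.5 * (x / sigma) ** 2)
    return kernel / kernel.sum()  # sums to 1, so total light is conserved


def blur(intensity, psf):
    """Incoherent imaging: convolve linear intensity with the PSF."""
    return np.convolve(intensity, psf, mode="same")


psf = gaussian_psf(sigma=3.0, radius=10)
point = np.zeros(101)
point[50] = 1.0                      # a single bright point
blurred = blur(point, psf)
print(blurred.sum(), int(np.argmax(blurred)))  # light is spread, not lost
```

Because this is linear, blurring two features together gives the same result as blurring each and adding. An isolated point blurred by a symmetric PSF stays centred; once neighbouring features overlap, or the PSF is lopsided from aberrations, the combined peak can land elsewhere.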

Then the blurs all go through a stacking algorithm. Lord alone knows what that does with different intensities.

What we see back at the sensor or output file is the result of all that.

The point (oh yes, the point) which is troubling me is whether the image processing (optical and computational) procedure puts the peak intensity in the right place.

If you draw intensity/position plots and average them, the peaks can move about sideways if the treatment of the numbers changes.
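Here's a small Python sketch of that effect with two made-up Gaussian intensity profiles: a bright one at x = 0 and a dimmer one (30% of the height) at x = 2, both the same width. The combined peak lands in a different place depending on whether you average the linear values, gamma-encoded values (a plain 2.2 power as a rough stand-in for sRGB), or logarithms (a geometric mean). The profiles, the gamma value and the function name are all assumptions for illustration; I'm not claiming any stacking program does any of these.

```python
import numpy as np

x = np.linspace(-5.0, 10.0, 1501)            # positions, 0.01 apart
bright = np.exp(-0.5 * x ** 2)               # peak 1.0 at x = 0
dim = 0.3 * np.exp(-0.5 * (x - 2.0) ** 2)    # peak 0.3 at x = 2


def peak_position(profile):
    """Position of the maximum of a sampled profile."""
    return float(x[np.argmax(profile)])


# Three ways to "average" the same two profiles.
# Decoding back to linear is monotonic, so it wouldn't move these peaks.
linear_mean = (bright + dim) / 2
gamma = 1 / 2.2
gamma_mean = (bright ** gamma + dim ** gamma) / 2   # average of encoded values
log_mean = (np.log(bright) + np.log(dim)) / 2        # geometric mean, in logs

for name, profile in [("linear", linear_mean), ("gamma", gamma_mean), ("log", log_mean)]:
    print(f"{name:6s} peak at x = {peak_position(profile):.2f}")
```

With these numbers the linear average peaks just to the right of the bright feature (around x = 0.09), the gamma-encoded one around x = 0.56, and the log one halfway at x = 1. The log case lands in the middle because, for equal-width Gaussians, taking logs turns each into a parabola and the heights drop out of the peak position.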

It's probably not a big deal at all, but we do have bright specular highlights.

It's a somewhat similar issue to how greys are treated in the Gamma Error problem.
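For reference, the grey version of the problem can be shown with the standard sRGB transfer function: averaging black (0) and white (1) as encoded values gives 0.5, which decodes to only about 21% of white's light, whereas a physically correct 50% mix encodes to about 0.735. The helper names are mine; the formulas are the piecewise sRGB curves.

```python
def srgb_to_linear(c):
    """Decode an sRGB-encoded value in [0, 1] to linear light."""
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


def linear_to_srgb(v):
    """Encode a linear-light value in [0, 1] with the sRGB curve."""
    if v <= 0.0031308:
        return 12.92 * v
    return 1.055 * v ** (1 / 2.4) - 0.055


black, white = 0.0, 1.0

# Naive: average the encoded numbers, as a naive resize or blend would
naive = (black + white) / 2
print("encoded 0.5 carries", round(srgb_to_linear(naive), 3), "of white's light")

# Correct: decode, average the light, re-encode
correct = linear_to_srgb((srgb_to_linear(black) + srgb_to_linear(white)) / 2)
print("a true 50% grey encodes to", round(correct, 3))
```

Averaging highlight profiles as encoded values is the same kind of mistake, just spread along a line instead of packed into one pixel.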